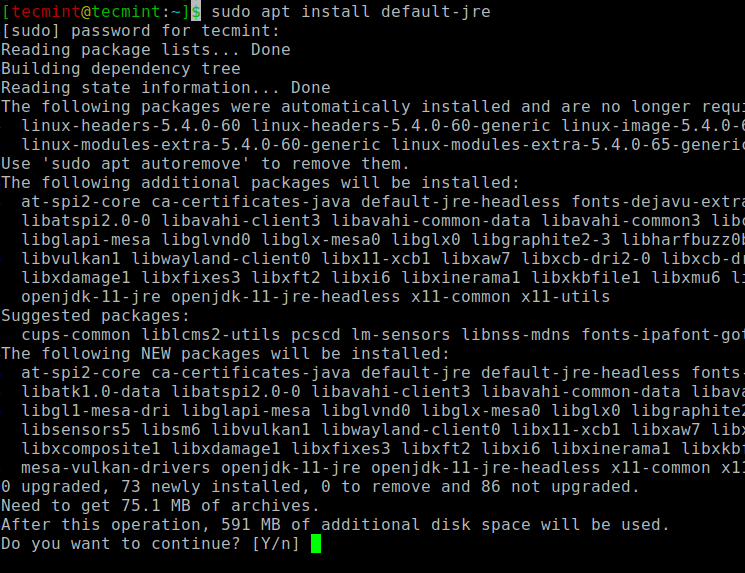

Many of you may have tried running Spark on Windows and hit the following error while running your project:

`16/04/02 19:59:31 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable`

`16/04/02 19:59:31 ERROR Shell: Failed to locate the winutils binary in the hadoop binary path java.io.IOException: Could not locate executable null\bin\winutils.exe`

This happens because your system does not have the native Hadoop binaries for Windows. You can build them by following my previous article or download a prebuilt copy.

The error below is also related to the native Hadoop binaries for Windows:

`16/04/03 19:59:10 ERROR util.Shell: Failed to locate the winutils binary in the`
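The `null` in `null\bin\winutils.exe` suggests that Hadoop could not resolve a home directory. To my knowledge, it looks at the `hadoop.home.dir` JVM system property first and falls back to the `HADOOP_HOME` environment variable. The sketch below is one way to check for the binary and set the property from code. The `WinutilsCheck` object and its method names are hypothetical helpers, not part of Spark or Hadoop.

```scala
import java.nio.file.{Files, Path, Paths}

// Hypothetical helper for illustration; not part of Spark or Hadoop.
object WinutilsCheck {

  // Expected location of winutils.exe under a given Hadoop home directory.
  def winutilsPath(hadoopHome: String): Path =
    Paths.get(hadoopHome, "bin", "winutils.exe")

  // Resolve the Hadoop home the way the lookup is assumed to work:
  // system property first, then environment variable.
  def resolveHadoopHome(
      props: Map[String, String] = sys.props.toMap,
      env: Map[String, String] = sys.env
  ): Option[String] =
    props.get("hadoop.home.dir").orElse(env.get("HADOOP_HOME"))

  // Set hadoop.home.dir only if winutils.exe really exists under hadoopHome.
  // Returns true when the property was set.
  def configure(hadoopHome: String): Boolean = {
    val found = Files.isRegularFile(winutilsPath(hadoopHome))
    if (found) System.setProperty("hadoop.home.dir", hadoopHome)
    found
  }
}
```

A call such as `WinutilsCheck.configure("C:\\hadoop")` would need to run before the first Spark context or session is created. `C:\hadoop` is only a placeholder for wherever the binaries were unpacked.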

Just make sure you download the correct version for your OS from Spark's website. You can refer to the Scala project used in this article on GitHub.

What to Expect

At the end of this article, you should be able to create and run your Spark SQL projects and spark-shell on Windows.

I have divided this article into three parts. You can follow any of the three modes depending on your specific use case.

- Single Project Access (Single Project Single Connection)
  - Every project will have its own metastore and warehouse.
  - Databases and tables created by one project will not be accessible by other projects.
  - Only one Spark SQL project can run or execute at a time.
- Multi Project Access (Multi Project Single Connection)
  - Every project will share a common metastore and warehouse.
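The difference between the two access modes listed above comes down to where the metastore and warehouse live. The sketch below assumes an embedded Derby metastore, which accepts only one JVM at a time, and builds the settings for each layout. `MetastoreLayout`, the `shared` folder name, and the root path are hypothetical. The property keys are the ones I believe Spark and Hive read, so treat them as an assumption to verify against your Spark version.

```scala
import java.nio.file.Paths

// Hypothetical helper; it only builds key/value pairs and does not start Spark.
object MetastoreLayout {

  // Directory that holds both metastore_db and the warehouse folder.
  // Single Project Access: one directory per project.
  // Multi Project Access: one shared directory for every project.
  def baseDir(root: String, project: String, shared: Boolean): String =
    if (shared) Paths.get(root, "shared").toString
    else Paths.get(root, project).toString

  // Settings for the chosen layout, using forward slashes so the
  // Derby URL is also valid on Windows.
  def sparkConf(root: String, project: String, shared: Boolean): Map[String, String] = {
    val base = baseDir(root, project, shared).replace('\\', '/')
    Map(
      "spark.sql.warehouse.dir" -> s"$base/warehouse",
      "spark.hadoop.javax.jdo.option.ConnectionURL" ->
        s"jdbc:derby:;databaseName=$base/metastore_db;create=true"
    )
  }
}
```

Each pair could then be passed to `SparkSession.builder().config(key, value)` together with `enableHiveSupport()`. With `shared = false`, two projects end up with separate metastores, which matches the note that their databases and tables are not visible to each other.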

This article is all about configuring a local development environment for Apache Spark on Windows.

Apache Spark is one of the most popular cluster computing technologies, designed for fast and reliable computation. It provides implicit data parallelism and fault tolerance by default. It integrates easily with Hive and HDFS and provides a seamless experience of parallel data processing.

By default, Spark SQL projects do not run on Windows and require some basic setup first. That setup is all we are going to discuss in this article, as I didn't find it well documented anywhere on the internet or in books. This article can also be used to set up a Spark development environment on Mac or Linux.

In my last article, I covered how to set up and use Hadoop on Windows.